I need to tell you something the data security industry doesn't want to admit.

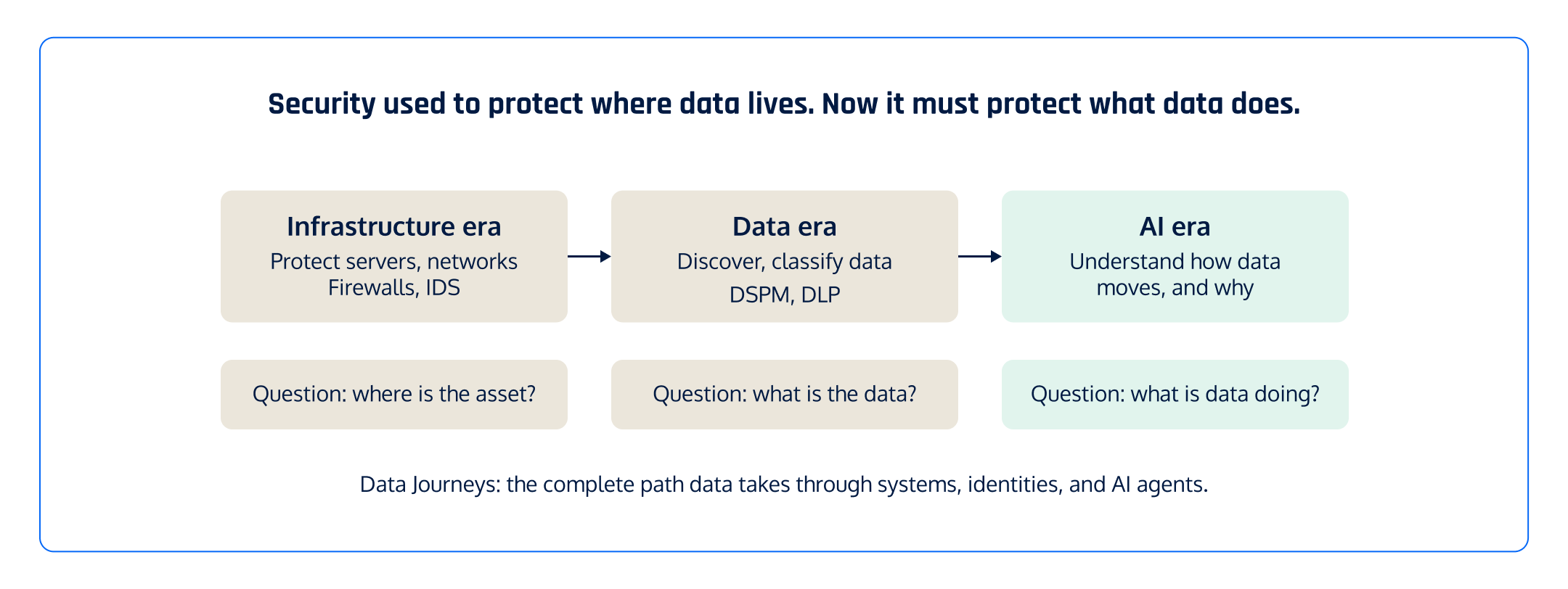

Almost every data security product today is built around the same assumption: if you can see your most important assets (the crown jewels), you can protect them. Find the sensitive data. Classify it. Prioritize alerts. Put locks on it.

That foundational assumption is flawed. And it gets more wrong every day in the AI era.

The core mistake

Today's security tools answer object questions.

- Where is the data?

- Which bucket has PII?

- Which identity has access?

These are useful questions.

But they're the wrong unit of analysis.

Modern systems don't fail because of objects. They fail because of interactions between objects.

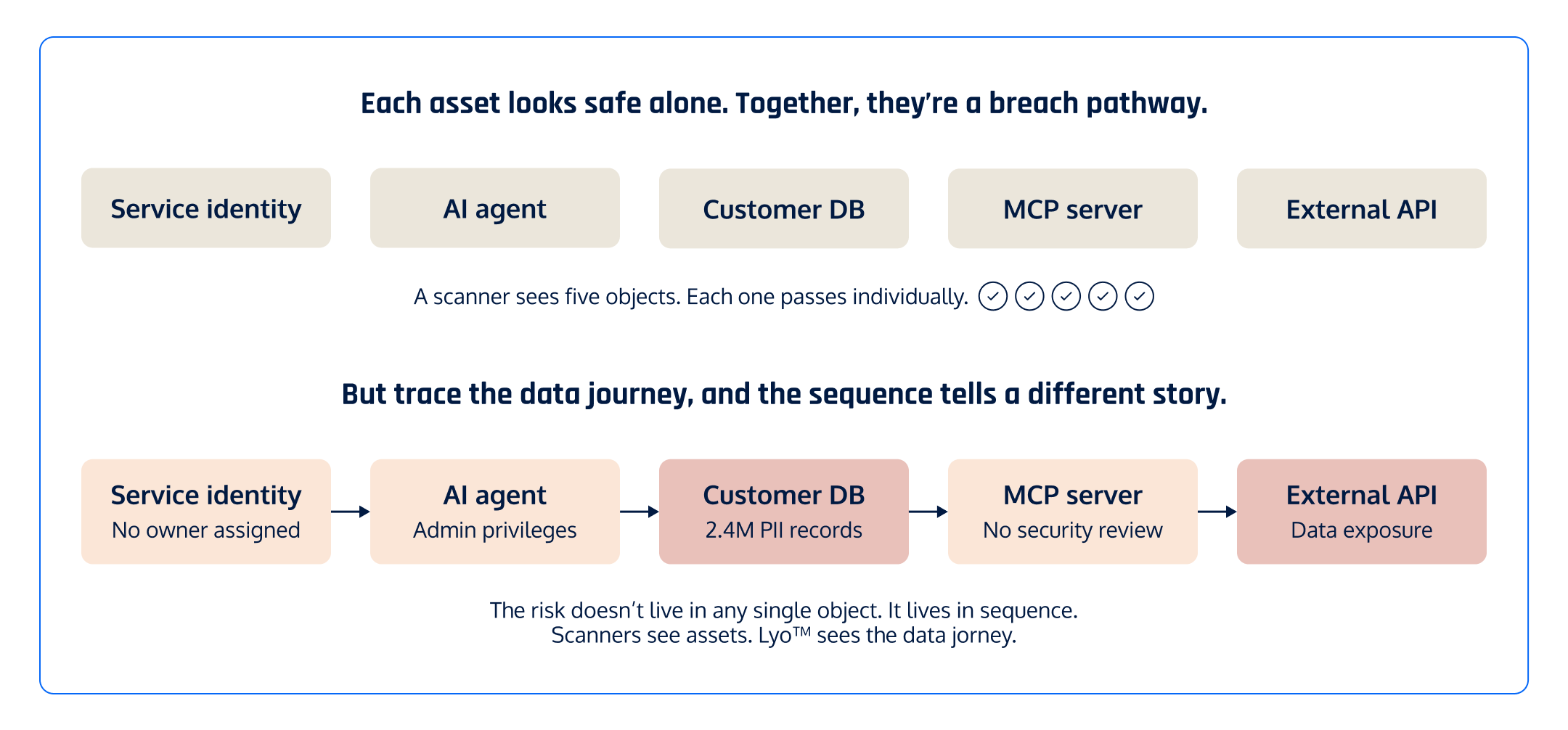

A database with customer PII might be properly secured. A service identity with admin privileges might be authorized. An AI agent calling an external API might be functioning as designed.

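None of those is dangerous in isolation. But connect them: the AI agent runs as the admin service identity, reads customer PII from the database, and sends it to the external API.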

That's not a list of findings. That's a breach pathway, a compound data risk. And no scanner on the market was built to see it.

The risk doesn't live in the database. It lives in the dataflow sequence. And the sequence only becomes visible when you trace the full data journey.
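To make the sequence idea concrete, here is a minimal sketch, assuming the environment has already been modeled as a directed data-flow graph. The node names, the `FLOWS` mapping, and the `breach_pathways` helper are all hypothetical. No single node is a finding on its own; the risk shows up only as a path from a sensitive source to an external sink, which a depth-first search can enumerate.

```python
from typing import Dict, List, Set

# Hypothetical data-flow graph: an edge A -> B means data can move from A to B.
# Node names are illustrative assumptions, not a real product schema.
FLOWS: Dict[str, List[str]] = {
    "customer_pii_db": ["svc_admin_identity"],  # admin identity can read the database
    "svc_admin_identity": ["ai_agent"],         # the agent runs as that identity
    "ai_agent": ["external_api"],               # the agent calls an external API
    "external_api": [],
}
SENSITIVE_SOURCES: Set[str] = {"customer_pii_db"}
EXTERNAL_SINKS: Set[str] = {"external_api"}


def breach_pathways(flows: Dict[str, List[str]],
                    sources: Set[str],
                    sinks: Set[str]) -> List[List[str]]:
    """Return every cycle-free path from a sensitive source to an external sink."""
    paths: List[List[str]] = []

    def walk(node: str, path: List[str]) -> None:
        if node in sinks:
            paths.append(path)
            return
        for nxt in flows.get(node, []):
            if nxt not in path:  # skip nodes already on this path to avoid cycles
                walk(nxt, path + [nxt])

    for src in sorted(sources):  # sorted for deterministic output
        walk(src, [src])
    return paths


if __name__ == "__main__":
    for p in breach_pathways(FLOWS, SENSITIVE_SOURCES, EXTERNAL_SINKS):
        print(" -> ".join(p))
```

Running it prints `customer_pii_db -> svc_admin_identity -> ai_agent -> external_api`. Remove any one edge, for example the agent's call to the external API, and the pathway disappears even though every individual node is unchanged.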

Why scanners can't fix this

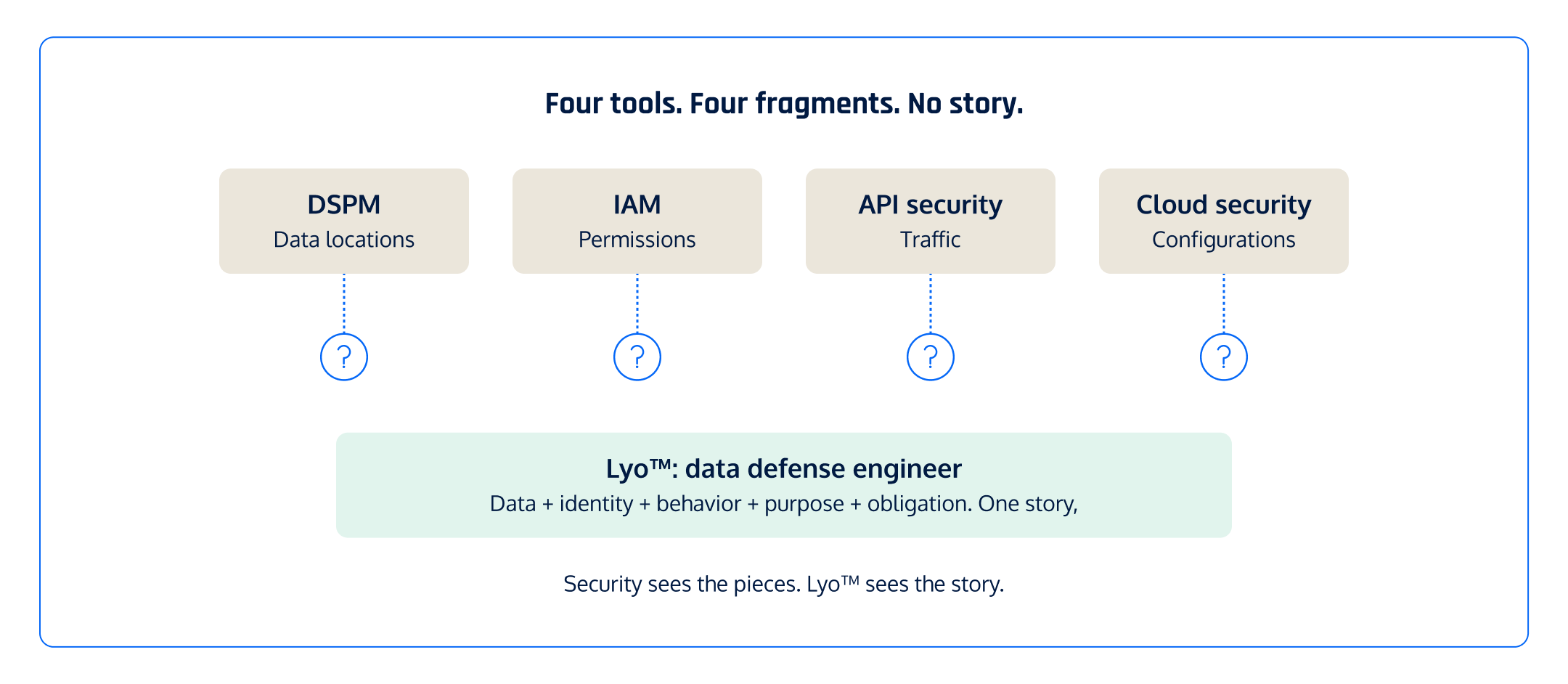

The security industry has built excellent tools for examining individual layers, one at a time.

This worked when software was predictable. You wrote code, shipped it, and it did what you designed it to do. Security was about finding the misconfigured object and fixing it.

That world ended when AI agents started making decisions with your data.

AI agents don't follow fixed instructions. They choose which system to access, which tool to call, which data to retrieve. Their workflows aren't static. They're dynamic graphs of actions that change with every execution. The number of possible sequences through your systems is exploding, and traditional scanning tools were never designed to understand any of them.
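A back-of-the-envelope count shows why the sequence space grows so quickly. Under the simplifying assumption that an agent can pick any of k actions at each of up to n steps, the number of distinct sequences is k^1 + k^2 + ... + k^n. The `count_sequences` helper below is a hypothetical illustration of that sum, not a model of any real agent.

```python
def count_sequences(num_actions: int, max_steps: int) -> int:
    """Count distinct action sequences of length 1..max_steps, assuming the
    agent may choose any of num_actions actions at every step (a simplification)."""
    return sum(num_actions ** n for n in range(1, max_steps + 1))


if __name__ == "__main__":
    # Illustrative (tools, steps) pairs; the values are arbitrary examples.
    for tools, steps in [(5, 3), (10, 5), (20, 8)]:
        print(f"{tools} tools, up to {steps} steps: {count_sequences(tools, steps):,} sequences")
```

With 5 tools and 3 steps there are 155 sequences; with 10 tools and 5 steps there are already 111,110. Real agents are constrained by permissions and prompts, so actual counts are lower, but the count still grows exponentially with the number of steps whenever more than one action is available.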

You can't solve a sequence problem by scanning objects faster for data classification, which is often the core value DSPM (data security posture management) providers describe.

Context is the only moat

Every vendor in our space is racing to add more coverage. Scan the cloud. Scan the code. Scan the AI models.

Coverage is necessary. It is not sufficient for data security today.

The real question is not "can you see the data?" The real question is "do you understand what the data is doing, who is accessing it, and why?"

That's context. And context is what turns a finding into an action.

Without context, you get: "sensitive data found in production database." With context, you get: "This AI agent has admin access to 2.4 million customer records, connected through an unreviewed MCP server, with no data processing agreement, violating GDPR Article 5(1)(f), and it can read, write, and delete that data right now."

One is a finding. The other is a decision you can act on in seconds.

What we built instead

We didn't build a better scanner. We built a data defense engineer.

Today, at RSAC 2026, we're making Lyo commercially available.

Lyo is the first AI-native intelligence that traces every data journey across your environment and attaches the context that makes every finding actionable.

Lyo does what no scanner was architected to do:

It sees compound risk. Scanners assess assets one at a time. Lyo maps the relationships between them. An AI agent alone may be fine. A database with PII may be secured. But that agent with admin access to that database, through an unvetted MCP server, using an unmonitored service identity? That's a compounding threat that only becomes visible when you trace the full sequence.

It understands the why. Every finding comes with the full context chain: what's wrong, why it's wrong, who's involved, what's impacted, and what to do. No stitching across four tools. No investigation tax.
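One way to picture that context chain is as a structured record rather than a one-line alert. The sketch below is hypothetical: the `ContextualFinding` class and its field names are assumptions, not Lyo's schema. The values come from the GDPR example earlier in this post, except the remediation step, which is an illustrative placeholder.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ContextualFinding:
    """Hypothetical finding enriched with a context chain: what, why, who, impact, action."""
    what: str
    why: str
    who: List[str]
    impact: str
    action: str

    def summary(self) -> str:
        # Render the whole chain as a single human-readable line.
        return (f"WHAT: {self.what} | WHY: {self.why} | WHO: {', '.join(self.who)} "
                f"| IMPACT: {self.impact} | ACTION: {self.action}")


finding = ContextualFinding(
    what="AI agent has admin access to 2.4 million customer records",
    why="Connected through an unreviewed MCP server with no data processing "
        "agreement, violating GDPR Article 5(1)(f)",
    who=["AI agent", "unreviewed MCP server"],
    impact="The agent can read, write, and delete that data right now",
    action="Revoke the agent's admin access pending review",  # illustrative placeholder
)

if __name__ == "__main__":
    print(finding.summary())
```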

It acts at machine speed. When a developer commits code at 2 AM that routes customer data to an unapproved API, Lyo catches it before production. A scanner waits until Monday. Lyo never waits.

You can just ask it. "Which AI agents can reach customer PII in production?" "What changed in our data flows since Tuesday?" Plain English in. Full context out. No query builder.

The math problem

There's a structural issue with the scanner model that the industry avoids because it's uncomfortable.

Scanners find problems. Humans fix problems. More data means more findings, and more findings mean more humans. The cost scales linearly.

That math worked when data volumes grew slowly. It breaks in the age of agentic AI, where data is being created, transformed, and multiplied at machine speed.

You can't hire your way out of this. You need an engineer that understands the full context and acts on it.

See it for yourself

If you're a security operator, CIO, or CISO reading this, ask yourself one question about your current tools: do they tell you the what, or do they tell you the why?

If it's just the what, you have a visibility problem disguised as a security program.

We're at RSAC this week, March 23 through 26 at Moscone Center. Come see Lyo in action. Ask it a hard question. Watch it trace the full data journey and show you the story in seconds.

Or book a demo.

The scan isn't what protects you. The story is.

And there's no security company that understands data better than us.