Your AI security expert. From AI asset inventory to AI data defense.

Continuously discover every model, agent, and MCP server that touches your data — and understand exactly what it's doing, who it's acting as, and whether it's safe.

AI is scaling faster than you can follow

Organizations are deploying AI agents, models, and third-party AI tools at unprecedented speed. But CISOs lack a unified view of what these systems can access, what data they're touching, and how risks occur in real time.

No central view of AI and how it interacts with data

AI agents, models, and MCP servers are deployed across teams without centralized visibility — creating shadow AI that interacts with your existing assets in ways no single tool can see.

Overprivileged AI agents create compound risk

Compromised AI agents with administrative access to sensitive data can delete, expose, or exfiltrate information, becoming insider threats operating at machine speed.

Third-party AI supply chain risk growing fast

MCP servers give AI agents trusted access to external services and data — and the ecosystem is expanding faster than traditional vendor assessments can keep up with.

The AI security platform that thinks with you

Traditional AI security tools inventory AI assets and flag configurations. Relyance AI continuously tracks what every AI agent is doing with your data — correlated with identity, infrastructure, and policy — so you understand risk at the intersection, not in isolation.

Every AI asset that can reach your data — mapped continuously

Ditch gateway-limited visibility. Relyance AI discovers across code and cloud — including shadow AI, ephemeral agents, and MCP servers that never pass through a centralized control.

Always-current AI asset inventory — models, agents, MCP servers, RAG databases, pipelines

Shadow AI and rogue agent detection — including SaaS-embedded and code-level integrations

Correlated with data and identity — not just asset lists

Every AI data flow arrives with the full context-chain already assembled

Not "an AI agent has access to customer PII." Instead: which agent, acting as which identity, reaching which data, through what path — and whether that combination is policy-compliant, cross-border, or contractually authorized.

Powered by Data Journeys™ — connecting data, identity, and AI behavior

Runtime vs. contract alignment — verify what's actually happening against your DPA

Compound risk surfaced automatically — no manual reconstruction across multiple tools

From AI risk identified to AI risk resolved — in seconds, not weeks

Eliminate the investigation tax. A unified policy view across AI, data, and identity posture — with severity and context already assembled — lets Lyo™ answer any AI risk question in seconds and suggest the right fix.

Unified policy violations view — across AI posture, data posture, and identity posture

Ask Lyo™ in natural language — "Which AI agents can reach customer PII in production?"

Code-level remediation — find the exact commit, and know what to do

Real-time data flow intelligence across code, cloud, and AI

Trace every flow from code to cloud to AI model in real time. The Data Exposure Graph connects data sensitivity, identity permissions, and AI agent behavior to surface compound risks that only emerge at the intersection. Unified obligations mapping ties every flow to its legal, contractual, and policy requirements automatically.

How does Relyance AI secure AI systems?

Relyance AI secures AI systems through four layers. First, it discovers all AI assets including agents, models, MCP servers, and third-party tools alongside non-AI data assets in a unified inventory. Second, it maps relationships between AI agents and data to surface compound risks using identity intelligence. Third, it monitors continuously with 24/7 policy-based alerting. Fourth, it delivers remediation recommendations that explain the risk context and how to fix it. The platform is agentless, API-first, and powered by Lyo™, the world's first AI-native data defense engineer.

Relyance AI vs. traditional AI-SPM tools

Traditional AI-SPM tools focus on discovering and classifying AI assets. Relyance AI goes beyond posture awareness to deliver context (why a risk exists), monitoring (automated guardrails), and recommended remediation (guided fixes), all unified across AI and your entire data estate in a single platform. AI-SPM is a feature within Relyance AI, not the platform itself.

Typical AI-SPM tools discover and classify AI assets. That's only the first step.


What security leaders say

“AI is creating and moving data faster than any team can track. Only AI-native tools like Relyance AI can keep up with the discovery and enforcement loop.”

Chris Bender, CISO

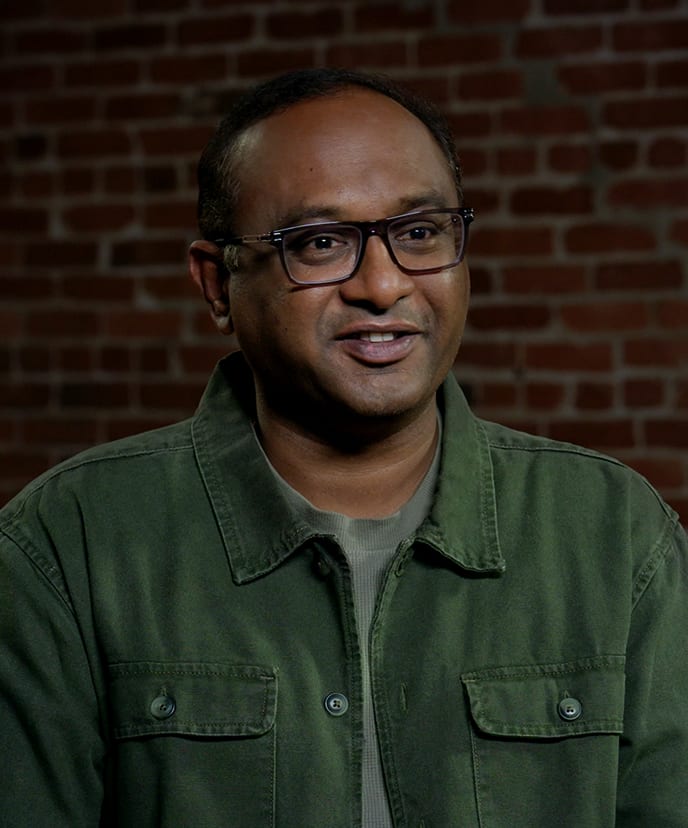

"Most tools show me a snapshot. Lyo™ gives me the full movie, around the clock. I can't afford to choose between innovation and security."

Karthik Chakkarapani, SVP & CIO

“Relyance gives us a complete, contextual understanding of our data landscape. We can see what we have, how it's used, who owns it, and where the risks are. This level of clarity is strengthening our overall security posture.”

Jason James, CIO

FAQ

What is AI security?

AI security is the practice of protecting AI systems, the data they consume, and the actions they take from threats that exploit the unique characteristics of artificial intelligence. This includes defending against data poisoning, prompt injection, model theft, supply chain compromise, and overprivileged AI agent access. AI security is distinct from traditional cybersecurity because AI systems are data-dependent, non-deterministic, and increasingly autonomous.

What is AI security posture management (AI-SPM)?

AI-SPM is the practice of continuously discovering, assessing, and monitoring security risks across an organization's AI assets, including models, agents, data pipelines, and third-party AI tools. AI-SPM provides foundational visibility into your AI footprint. However, AI-SPM alone focuses on posture awareness, meaning what exists and how it's configured. It does not provide the context or remediation needed for complete AI security. Relyance AI treats AI-SPM as a capability within its broader data defense platform.

What is the difference between AI security and AI governance?

AI security protects AI systems from threats. AI governance is the framework of policies, oversight, and compliance controls that ensures AI is deployed responsibly. Security is defense. Governance is management. In practice, they depend on each other: governance without security is unenforceable, and security without governance is reactive. Relyance AI addresses both through a unified platform. For a full treatment of governance, see our definitive guide to AI governance.

What is compound risk in AI security?

Compound risk is a security vulnerability that forms when individually low-risk elements combine into a serious threat. For example, an AI agent alone may not be dangerous. A Snowflake table with customer PII may be properly secured. But an AI agent with administrative access to that table creates a critical vulnerability no individual scan would flag. Compound risks are invisible to tools that assess AI assets and data assets separately. Relyance AI detects compound risks by correlating identity, access permissions, and data sensitivity in real time.
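To make the idea concrete, here is a minimal sketch of the kind of correlation described above. It is an illustration only, not how Relyance AI implements detection: the `Grant` record, the `find_compound_risks` function, and every identity and table name are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    """One permission edge: an identity holds a privilege on a data asset (hypothetical model)."""
    identity: str
    asset: str
    privilege: str  # e.g. "read", "write", "admin"


def find_compound_risks(agent_identities, sensitive_assets, grants,
                        risky_privileges=frozenset({"admin"})):
    """Return grants where an AI agent identity holds a risky privilege on a sensitive asset."""
    return [
        g for g in grants
        if g.identity in agent_identities
        and g.asset in sensitive_assets
        and g.privilege in risky_privileges
    ]


# Illustrative data: none of these names come from a real environment.
grants = [
    Grant("support-agent", "snowflake.customers", "admin"),    # agent + PII + admin
    Grant("support-agent", "snowflake.public_docs", "admin"),  # asset is not sensitive
    Grant("analyst-jane", "snowflake.customers", "admin"),     # identity is not an AI agent
    Grant("billing-agent", "snowflake.customers", "read"),     # privilege is not risky
]

risks = find_compound_risks(
    agent_identities={"support-agent", "billing-agent"},
    sensitive_assets={"snowflake.customers"},
    grants=grants,
)
for r in risks:
    print(f"{r.identity} has {r.privilege} on {r.asset}")
```

Each of the four grants looks unremarkable when checked against only one list. Only the first satisfies all three conditions at once, which is the point of assessing agents, data, and permissions together.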

What are the biggest AI security threats in 2026?

The most significant AI security threats facing enterprises in 2026 are data poisoning (corrupting training data to manipulate AI outputs), agentic AI risk (autonomous agents with overprivileged access acting as insider threats at machine speed), AI supply chain compromise (vulnerabilities in third-party models, MCP servers, and open-source components), prompt injection (manipulating AI agents into executing unauthorized actions), and identity exploitation (AI-generated deepfakes and non-human identity sprawl). The shift from generative AI to agentic AI has fundamentally expanded the attack surface because agents execute real-world actions, not just generate content.

What are MCP servers and why are they a security risk?

MCP (Model Context Protocol) servers connect AI agents to external services like databases, cloud platforms, SaaS tools, and APIs. They are becoming the standard mechanism for AI agent interaction with external systems. Each MCP server is a trusted channel that grants AI agents access to sensitive resources. A compromised or misconfigured MCP server can give an attacker direct access to enterprise data through a channel that security tools implicitly trust. Relyance AI provides automated discovery and continuous risk assessment for third-party MCP servers across the enterprise.
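As a rough illustration of what a first-pass MCP inventory check might look like, the sketch below reads a client configuration and lists servers that are not on an approved list. It assumes the configuration uses a top-level `mcpServers` JSON object keyed by server name, a layout several MCP clients use. The function name, the allowlist, and the sample server entries are hypothetical, and this is not a description of Relyance AI's discovery mechanism.

```python
import json


def unapproved_mcp_servers(config_text, approved):
    """Return the names of configured MCP servers that are not on the approved list."""
    config = json.loads(config_text)
    # Assumption: servers are declared under a top-level "mcpServers" object.
    servers = config.get("mcpServers", {})
    return sorted(name for name in servers if name not in approved)


# Hypothetical client configuration; commands and arguments are placeholders.
sample = """
{"mcpServers": {
  "filesystem": {"command": "npx", "args": ["-y", "example-fs-server"]},
  "crm-export": {"command": "python", "args": ["crm_server.py"]}
}}
"""

flagged = unapproved_mcp_servers(sample, approved={"filesystem"})
print(flagged)
```

A name-based allowlist only catches servers that were never reviewed. It says nothing about whether an approved server has since been compromised or misconfigured, which is why continuous assessment matters.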

How is Relyance AI different from Wiz AI-SPM or Palo Alto Prisma Cloud AI-SPM?

Wiz and Palo Alto Networks offer AI-SPM as a feature within their cloud-native application protection platforms (CNAPPs). Their approach starts from cloud infrastructure posture and adds AI asset discovery on top. Relyance AI takes a data-first approach, starting from the data journey and mapping AI risk in the context of what data is flowing, who or what is accessing it, and why. This surfaces compound risks that cloud-centric tools miss because they don’t map the relationships between AI agents and the sensitive data they access. Relyance also delivers contextual remediation and autonomous policy monitoring, not just posture alerts.

How does Relyance AI detect shadow AI?

Relyance AI continuously discovers AI assets across your environment through agentless, API-first scanning across code repositories, CI/CD pipelines, cloud runtime, SaaS applications, and third-party integrations. This includes first-party models, third-party AI tools, AI features embedded within SaaS vendor applications, and unsanctioned AI tools adopted by teams without IT approval. Each discovered asset is mapped to the data it accesses, the permissions it holds, and the risk it introduces.
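One small piece of code-level discovery can be sketched as a dependency scan: look through a Python requirements file for known AI SDK packages. This is a simplified example under stated assumptions, not the platform's actual scanner. The `AI_PACKAGES` watchlist and the `find_ai_dependencies` function are hypothetical, and a real scan would also need to cover other ecosystems, CI/CD pipelines, and SaaS integrations.

```python
import re

# Hypothetical watchlist of package names that indicate AI usage.
AI_PACKAGES = {"openai", "anthropic", "langchain", "transformers"}


def find_ai_dependencies(requirements_text):
    """Return the sorted AI package names declared in a requirements file."""
    found = set()
    for line in requirements_text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        # The package name ends at the first version operator, extras bracket, or marker.
        name = re.split(r"[<>=!~\[;\s]", line, maxsplit=1)[0].lower()
        if name in AI_PACKAGES:
            found.add(name)
    return sorted(found)


sample = """\
requests==2.31.0
openai>=1.0  # added by the growth team
LangChain[all]~=0.2
# anthropic is only mentioned in a comment
"""

detected = find_ai_dependencies(sample)
print(detected)
```

The scan reports `openai` and `langchain` but not `anthropic`, since that name appears only in a comment. Matching on declared dependencies rather than raw text keeps false positives down.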

What compliance frameworks does the AI security expert support?

Relyance AI maps AI assets and data flows to obligations under the EU AI Act, ISO 42001, NIST AI RMF, GDPR, SOC 2, and sector-specific regulations including SOX, HIPAA, and NIS2. The platform continuously detects potential compliance gaps across first-party, third-party, and SaaS vendor AI and generates audit-ready evidence in seconds.

Does Relyance AI require agents to deploy?

No. Relyance AI is fully agentless and API-first, designed for petabyte-scale enterprise environments. Deployment options include full SaaS (managed cloud for rapid deployment), InHost (runs entirely within your VPC), and DirectConnect (private link between your network and the platform).

Who is Relyance AI built for?

Relyance AI is built for CISOs, information security teams, data engineering teams, and AI governance leads at mid-size and enterprise organizations deploying AI agents and tools at scale. It is designed for companies running AI in production across cloud services, databases, and enterprise applications that need unified visibility and control over what AI systems can access and do.
