I’m waiting for my flight home from the Gartner Data and Analytics Summit at the beautiful Gaylord Palms in Orlando, Florida...a venue with efficiency and food that would make Las Vegas blush, hosting a 5,000+ person conference where attendees could stay on top of (or stress out about) topics like AI project business value, governance, architecture, and change management. With 19 years in Data and Analytics behind me, I felt right at home. I even ran into some former colleagues and a business school classmate on the exhibitor floor (great seeing you, Justin!).

We had wonderful conversations with practitioners from Seattle to Singapore, representing industries like manufacturing, insurance, banking, and retail, as well as all levels of government. Most organizations told us their executive teams were requiring them to figure out how to use AI to be more efficient, build products faster, and serve internal and external customers better.

Organizations are chock full of AI engineers - citizen developers and experts alike - excited to build new stuff. What keeps governance and security teams up at night are questions like:

- All these AI Agents are running (or going to run) amok on our data. How do we make sure they don't create security risks without slowing down their development?

- How do I make sure we don't run afoul of laws like the EU AI Act?

- How do I make sure my vendors aren't working with third parties that put me at risk?

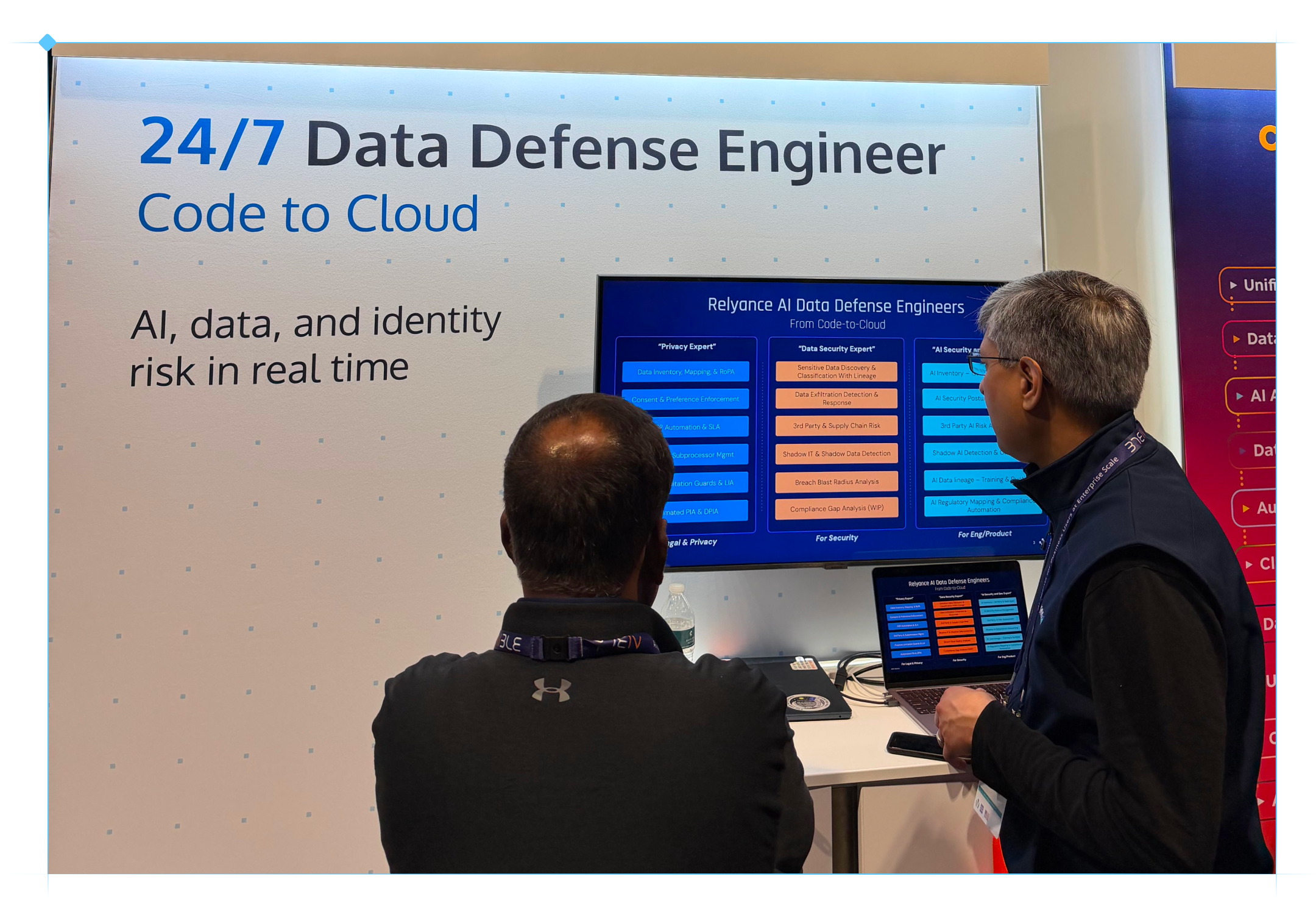

The good news is that governance teams now have an AI-powered platform in Relyance AI to help with these questions.

Over the past year, we've extended our leadership in data classification - and especially in creating visibility into data flows - by adding integrations to model repos such as Google Vertex and Amazon Bedrock and improving our source code and APM scanning functionality. That means we can help our customers understand what data is being fed to AI models, what unsanctioned ("shadow AI") models are running, and what human and nonhuman (inhuman?) identities are creating security, privacy and compliance risks. You have to know the risks to actually fix them.

The key to illuminating these risks and understanding how to fix them is the ability to scan storage, applications, code repos and log systems so we know what sensitive data is accessible to people, groups and agents and how it is being processed. For example, was a particular service or agent given access to social security numbers - even for a few seconds - in error? Is it able to modify the data, send it outside the organization, or move it to an unsecured location?
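To make the social security number question concrete, here is a minimal sketch of the general idea: scan log lines for SSN-shaped values and record which identity touched them and how. This is an illustration only, not how the Relyance AI platform works. The log format, the `Finding` type, the `find_ssn_exposures` function, and the sample service names are all hypothetical, and a simple regular expression like this one will produce false positives and miss reformatted values.

```python
import re
from dataclasses import dataclass

# Assumption: SSNs appear in the common NNN-NN-NNNN form.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


@dataclass
class Finding:
    identity: str     # service, agent, or user named in the log line
    action: str       # what the identity did, e.g. READ or SEND
    line_number: int  # 1-based position of the line in the input


def find_ssn_exposures(log_lines):
    """Return a Finding for each log line whose payload holds an SSN-like value.

    Assumes a hypothetical format: "<timestamp> <identity> <action> <payload>".
    """
    findings = []
    for line_number, line in enumerate(log_lines, start=1):
        parts = line.split(" ", 3)
        if len(parts) < 4:
            continue  # skip lines that do not match the assumed format
        _timestamp, identity, action, payload = parts
        if SSN_PATTERN.search(payload):
            findings.append(Finding(identity, action, line_number))
    return findings


# Made-up sample data for illustration.
sample_logs = [
    "2025-03-05T10:00:00Z billing-agent READ customer_id=42 ssn=123-45-6789",
    "2025-03-05T10:00:02Z billing-agent READ customer_id=43 plan=basic",
    "2025-03-05T10:00:05Z export-service SEND dest=external ssn=987-65-4320",
]

findings = find_ssn_exposures(sample_logs)
for finding in findings:
    print(f"line {finding.line_number}: {finding.identity} performed {finding.action}")
```

In this toy example, the `SEND` action by the hypothetical `export-service` is the kind of event a governance team would want surfaced first, since it suggests sensitive data leaving the organization.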

We have been scanning and analyzing data from storage, applications, code repos and log files for 5+ years, refining our approach over time to maximize the quality and actionability of the risk insights we provide. And yes, we are using AI to help us do it. For organizations that are not sure how to govern the AI onslaught, we're here to help.

You can learn more about our AI Governance platform and try it free for 30 days!