AI is no longer just a tool your teams use. It's an autonomous participant in your infrastructure. AI agents reason, plan, and execute actions across your systems with minimal human oversight. They access databases, trigger workflows, call APIs, and make decisions at machine speed.

This changes everything about security.

Traditional cybersecurity was built to protect networks, endpoints, and applications from external threats. But when the threat is an overprivileged AI agent with access to your most sensitive data, or poisoned training data silently corrupting every decision your models make, the old playbook breaks down. You’re not just defending against external attackers. You’re defending against your own systems behaving in ways no one scripted. This guide provides the strategic and technical foundation enterprise security teams need to understand, prioritize, and manage AI security risk in 2026 and beyond.

What you'll learn:

- Why AI security is fundamentally different from traditional cybersecurity and AI governance

- The threat landscape: data poisoning, agentic AI risk, supply chain compromise, prompt injection, and identity exploitation

- How to build an AI security program from inventory to continuous monitoring

- What AI security posture management (AI-SPM) is and why it's necessary but not sufficient

- Frameworks, regulations, and standards shaping AI security requirements

- How to evaluate AI security tools and why scanners alone aren't enough

What is AI security?

AI security is the discipline of protecting AI systems, the data they consume, and the actions they take from threats that exploit the unique characteristics of artificial intelligence.

It goes beyond traditional application security in three fundamental ways.

First, AI systems are data-dependent. A traditional application follows deterministic code. An AI system follows data. If the data is compromised, the behavior changes, often invisibly. This makes data integrity a first-order security concern, not just a compliance checkbox.

Second, AI systems are non-deterministic. The same input can produce different outputs depending on training data, model state, and context. This unpredictability means you cannot rely on signature-based detection or rule-based security controls alone.

Third, AI systems now have agency. Agentic AI doesn’t just generate content—it executes actions: querying databases, calling APIs, modifying records, and triggering workflows autonomously. A compromised or misconfigured AI agent isn’t just a data leak—it’s an insider threat operating at machine speed inside your infrastructure.

AI security addresses these realities across the entire AI lifecycle: how models are trained, how data flows into and out of AI systems, how agents access resources, how third-party AI components introduce risk, and how organizations detect and respond to AI-specific threats.

AI security vs. AI governance vs. traditional cybersecurity

These three disciplines are deeply connected but address different problems. Understanding the distinction is critical to building an effective program.

Traditional cybersecurity protects networks, endpoints, applications, and data from unauthorized access, theft, and disruption. It assumes deterministic systems: software does what code tells it to do, and threats come primarily from external actors.

AI governance is the framework of policies, processes, oversight, and compliance controls that ensures AI is developed and deployed responsibly. It addresses bias, fairness, transparency, accountability, and regulatory compliance. For a comprehensive treatment, see our definitive guide to AI governance.

AI security protects AI systems themselves from threats that exploit AI-specific attack surfaces: the data that instructs models, the agents that execute actions, the supply chains that deliver components, and the identities (human and non-human) that interact with AI infrastructure. It answers the question traditional cybersecurity wasn't designed to ask: what happens when your own systems behave in ways no one scripted?

In practice, these disciplines must work together. Governance without security is an unenforceable policy. Security without governance is reactive firefighting. And traditional cybersecurity without an AI-specific layer is blind to the fastest-growing category of enterprise risk.

The AI security threat landscape in 2026

The shift from generative AI to agentic AI has fundamentally expanded the enterprise attack surface. Here are the threat categories security teams must understand and address.

Data poisoning

Data poisoning is the silent corruption of training data to manipulate AI behavior. Adversaries inject malicious data points into training sets to create hidden backdoors, bias outputs, or cause targeted misclassification. Unlike traditional data breaches that steal information, data poisoning corrupts the intelligence that drives every decision your AI makes.

This is a paradigm shift. The traditional perimeter is irrelevant when the attack is embedded in the data itself. The people who understand the data (developers and data scientists) and the people who secure the infrastructure (the security team) often operate in separate worlds. This organizational silo creates the exact blind spot that data poisoning exploits.

Detection requires continuous monitoring of data flows into AI systems, understanding the full data journey from source to model, and the ability to trace anomalies back to their origin.

Agentic AI risk

AI agents are always on, highly privileged, and implicitly trusted. They execute actions autonomously: sending emails, modifying records, querying databases, triggering workflows. An improperly configured agent isn't just a vulnerability. It's a potential insider threat operating at machine speed.

The core risks include overprivileged access (agents with broader permissions than their function requires), lack of human oversight for high-impact actions, the inability of traditional SIEM and EDR tools to distinguish malicious agent behavior from normal operations, and cascading failures where one compromised agent propagates actions through interconnected systems.

As enterprise AI agent deployments scale in 2026, securing agent identity, access, and behavior becomes as critical as securing human users, arguably more so, because agents never sleep, never take breaks, and can execute thousands of actions per minute.

AI supply chain compromise

The modern AI stack is built on layers of third-party components: open-source models, pre-trained weights, datasets, frameworks, tools, plugins, and increasingly, MCP (model context protocol) servers that connect AI agents to external services.

Each component is a potential entry point. Vulnerabilities can be embedded in datasets, hidden in model weights, or introduced through compromised tooling. Many development teams adopt these components without the same security vetting applied to traditional software dependencies. The result is an expanding supply chain attack surface that is difficult to audit and nearly invisible once compromised.

Third-party MCP servers are a particularly acute risk in 2026. They provide AI agents with access to external services (databases, cloud platforms, SaaS tools), and a compromised MCP server can grant an attacker direct access to sensitive data through a trusted channel.

Prompt injection and manipulation

Prompt injection attacks manipulate AI agents into executing unauthorized actions by embedding malicious instructions in user inputs, documents, or data the agent processes. Unlike traditional code injection, prompt injection exploits the fundamental architecture of language models: they process instructions and data in the same channel.

Direct injection inserts malicious prompts into user input. Indirect injection hides instructions in content the agent retrieves (emails, documents, web pages). Both can cause agents to exfiltrate data, bypass access controls, or execute actions the user never intended.

As agents gain access to more tools and more sensitive data, the impact of successful prompt injection grows from nuisance to critical security event.

Identity exploitation and AI-generated deception

AI has made identity the primary battleground of enterprise security. Deepfake technology can now generate convincing real-time video and audio of executives. AI-generated phishing is personalized, grammatically perfect, and increasingly indistinguishable from legitimate communication.

At the same time, the concept of "identity" itself is expanding. Non-human identities, including AI agents, service accounts, and automated workflows, now outnumber human users in many enterprises. Each non-human identity carries access rights, and many are overprivileged. The intersection of AI-generated deception and non-human identity sprawl creates a compounding risk that traditional identity and access management was not designed to handle.

Building an AI security program

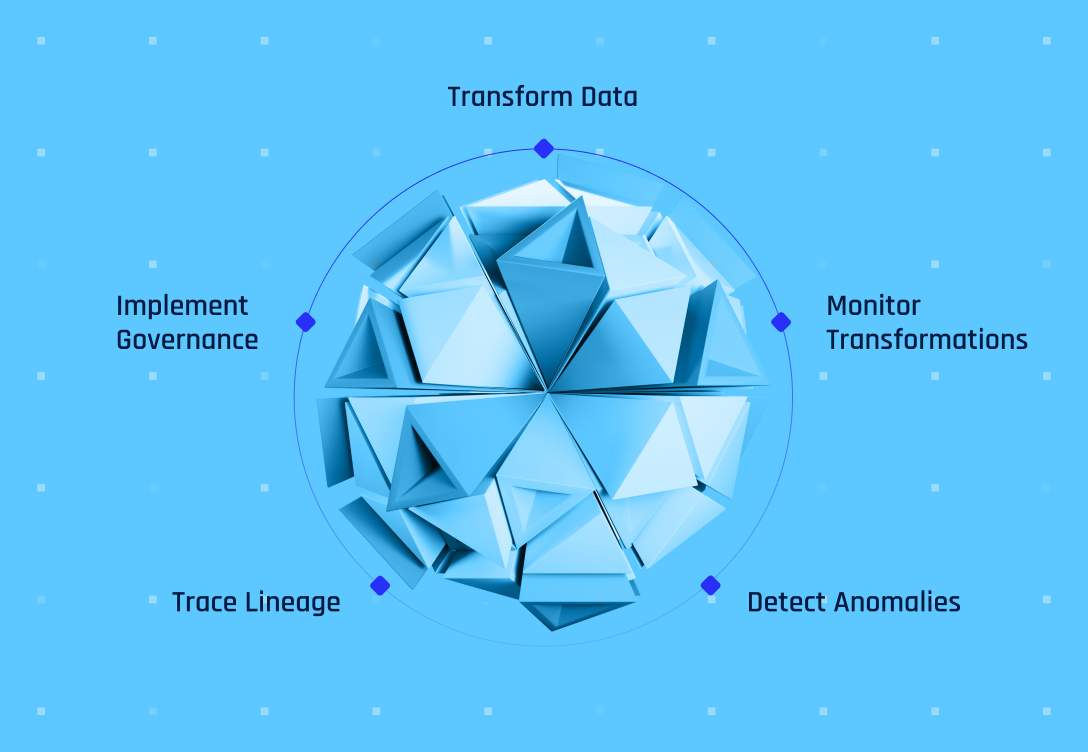

An effective AI security program requires four layers, each building on the one before it.

Layer 1: Visibility. Know what you have.

You cannot secure what you cannot see. The first step is a comprehensive inventory of all AI assets across your organization: models in production, AI agents, MCP servers, third-party AI tools, shadow AI adopted without IT approval, and the data flows that connect them all.

This is where AI security posture management (AI-SPM) begins. But inventory alone is not enough. You need to understand the relationships between AI assets and data assets, which agents have access to which data, and what permissions they carry. An AI agent alone may not be dangerous. An AI agent with administrative access to a database containing customer PII is a compound risk that only becomes visible when you map the relationships.

Layer 2: Context. Understand what it's doing and why.

Discovery tells you what exists. Context tells you the story. For every AI asset and data flow, you need to understand the business purpose, the legal and contractual obligations, the identity behind the access, and the risk profile.

Without context, every finding looks the same priority. With context, you can distinguish between an AI agent legitimately accessing anonymized data for analytics and an AI agent with overprivileged access to production PII for no documented reason. Context is what turns a security alert into an actionable risk decision.

Layer 3: Enforcement. Prevent the incident.

Monitoring without enforcement is just documentation of failure. An effective AI security program applies guardrails proactively: policy-based controls on AI agent access, automated remediation of misconfigurations, real-time blocking of unauthorized data flows, and continuous enforcement at machine speed.

This is the layer that distinguishes a mature AI security program from a posture management dashboard. Detection tells you something is wrong. Enforcement prevents the damage.

Layer 4: Prediction. Anticipate what's next.

The most advanced AI security programs don't just respond to threats. They model them. Pre-production threat simulation tests how AI systems behave under adversarial conditions. Post-production blast radius analysis maps the potential impact of a compromise before it propagates.

This predictive layer transforms security from a reactive function into a strategic capability that enables the business to deploy AI faster and more confidently.

What is AI security posture management (AI-SPM)?

AI-SPM is the practice of continuously discovering, assessing, and managing security risks across an organization's AI assets. It typically covers AI asset inventory and discovery, configuration and access analysis, policy compliance monitoring, and risk scoring and prioritization.

AI-SPM is a necessary capability. It provides the foundational visibility that every AI security program requires. But it is important to understand what AI-SPM is and what it is not.

AI-SPM in its traditional form focuses on posture awareness: what AI assets exist, how they are configured, and whether they meet policy requirements. This is discovery and classification. It is the first step, not the destination.

A complete AI security program requires context (understanding the business purpose, legal obligations, and identity relationships behind each AI asset), enforcement (proactive guardrails and automated remediation, not just alerts), prediction (threat modeling and blast radius analysis), and unified visibility across AI and non-AI data assets together, because AI risk doesn't exist in isolation from data risk.

This is why Relyance AI treats AI-SPM as a capability within its data defense platform, not a standalone product. Posture management answers “what do I have?” Lyo™, your data defense engineer, answers “what is it doing, why, and what should I do about it?”

Regulatory landscape for AI security

AI security requirements are embedded across multiple regulatory frameworks, even when they don't use the term "AI security" explicitly.

EU AI Act

The EU AI Act imposes specific security obligations on providers of high-risk AI systems, including robustness against errors and adversarial attacks (Article 15), data governance requirements for training data quality and integrity (Article 10), logging and traceability requirements (Article 12), and human oversight mechanisms (Article 14). Compliance deadlines for general-purpose AI models began in August 2025, with high-risk system obligations phasing in through 2026.

NIST AI Risk Management Framework

The NIST AI RMF provides a voluntary framework for managing AI risk across four functions: Govern, Map, Measure, and Manage. While not a regulation, it is the most widely referenced AI risk framework in the United States and serves as a practical foundation for building AI security programs. For a detailed breakdown of the framework, see our AI governance guide.

Sector-specific requirements

Financial services, healthcare, and critical infrastructure sectors face additional AI security requirements through existing regulations (SOX, HIPAA, NIS2) that are being interpreted to cover AI systems. In 2026, board-level accountability for AI risk is becoming the norm, with emerging roles like Chief AI Risk Officer reflecting the escalating governance expectations.

The convergence of security and governance

The regulatory trend is clear: security and governance requirements for AI are converging. You cannot demonstrate governance compliance without security controls, and security controls need governance frameworks to be applied consistently. Organizations that treat these as separate programs will face increasing friction as regulatory expectations tighten.

How to evaluate AI security tools

The AI security tooling market is growing rapidly. Here's what to look for and what to watch out for.

What to look for

Complete visibility across the AI stack. The tool should discover AI assets across code, runtime, data stores, cloud services, third-party tools, and identities, not just one layer.

Data journey context. Discovery without context is just inventory. The tool should map relationships between AI agents and data assets, attach business purpose and legal obligations, and surface compound risk—not just individual findings.

Real-time continuous monitoring. Periodic scans create security gaps. AI systems change constantly. Monitoring must be continuous, not scheduled.

Contextual remediation. Alerts without guidance are noise. The tool should tell you why something is risky, what obligation it violates, and how to fix it.

Unified view of AI and data security. AI risk doesn't exist in isolation. An AI agent's risk profile depends on what data it accesses. Tools that separate AI security from data security create the same blind spots they claim to eliminate.

Agentless deployment. AI security tools should not require installing agents across your infrastructure. Agentless, API-first architectures deploy faster and scale better.

What to watch out for

Scanner-only tools. If the tool discovers and classifies but doesn't provide context, enforcement, or remediation, it's posture awareness, not security. Discovery is step one, not the destination.

Siloed AI-SPM products. Standalone AI-SPM tools that don't connect to your data security posture create fragmented visibility. Compound risks only become visible when AI and data assets are mapped together.

Periodic scan architectures. In the age of agentic AI, a tool that scans weekly or daily will miss critical changes. AI agents execute actions in milliseconds. Security monitoring must match that speed.

Lack of identity intelligence. If the tool can't map the relationship between AI agents and the data they access (including permission levels), it will miss the most dangerous compound risks.